GutenOCR is a family of vision-language models (VLMs) designed to serve as a “grounded OCR front-end.” Traditional OCR pipelines are brittle, and modern “OCR-free” VLMs often lack precise token-to-pixel alignment. GutenOCR addresses both problems: it is fine-tuned to provide high-quality text transcription and explicit geometric grounding (bounding boxes) through a unified, prompt-based interface.

Abstract

Traditional OCR pipelines are often brittle, while modern “OCR-free” Vision-Language Models (VLMs) frequently lack precise token-to-pixel alignment. To address this, we introduce GutenOCR, a family of VLMs designed specifically as a “grounded OCR front-end.” By fine-tuning Qwen2.5-VL on a curriculum of synthetic and real-world documents, GutenOCR provides both high-quality text transcription and explicit geometric grounding (bounding boxes) through a unified, prompt-based interface. This approach allows downstream systems to request exactly the data format they need, from plain text to complex JSON structures.

Key Contributions & Results

- Unified Interface: Transforms Qwen2.5-VL models into specialized OCR systems supporting full-page reading, detection, localized reading, and conditional detection via prompting.

- In-Domain Improvements: GutenOCR-7B more than doubles the composite grounded OCR score of its base model (0.40 to 0.82) on 10.5K held-out pages, with especially large gains in localized reading and detection.

- Fox Benchmark: Significantly outperforms baselines on region-level and line-level OCR, with GutenOCR-3B achieving a region-level Character Error Rate (CER) of 0.053, surpassing even the dedicated Fox model.

- Curriculum Learning: Training uses a three-stage curriculum across synthetic data, real-world business documents, and long-context scientific articles to progressively build layout and grounding competency.

- Trade-offs: GutenOCR reads page content accurately (high Page F1), but it orders text by 2D layout columns, and this order can differ from the reading order of a reference transcript. It also shows some catastrophic forgetting of color-based prompts and a slight degradation in math formula recognition.
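The Fox results are reported as Character Error Rate (CER), and the trade-off note contrasts an accurate Page F1 with layout-driven ordering. The following minimal sketch shows how both can hold at once. CER here follows its standard definition: character edit distance divided by reference length. The bag-of-words F1 is an assumed, order-insensitive stand-in for Page F1 and is not the paper's exact metric. The two-column page is made up.

```python
from collections import Counter


def levenshtein(a: str, b: str) -> int:
    """Character-level edit distance (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free when chars match)
            ))
        prev = curr
    return prev[-1]


def cer(hypothesis: str, reference: str) -> float:
    """Character Error Rate: edit distance normalized by reference length."""
    return levenshtein(hypothesis, reference) / max(len(reference), 1)


def bag_of_words_f1(hypothesis: str, reference: str) -> float:
    """Order-insensitive word F1 (assumed stand-in for Page F1, illustration only)."""
    hyp, ref = Counter(hypothesis.split()), Counter(reference.split())
    overlap = sum((hyp & ref).values())  # multiset intersection ignores order
    if overlap == 0:
        return 0.0
    precision = overlap / sum(hyp.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


# Made-up two-column page: left column "alpha", "beta"; right column "gamma", "delta".
reference = "alpha gamma beta delta"   # hypothetical row-by-row reference order
hypothesis = "alpha beta gamma delta"  # column-by-column reading order
print(bag_of_words_f1(hypothesis, reference))  # 1.0
print(cer(hypothesis, reference) > 0)          # True
```

The word-level F1 ignores order, so a column-wise read of a row-ordered reference still scores 1.0. The order-sensitive CER registers the difference.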

Methodology

- Data: The training mixture combines large-scale real-world documents (business forms, scientific articles) with synthetic data designed to teach precise grounding (e.g., “Grounded LaTeX” and “SynthDoG Grounding”).

- Curriculum Learning: Training progresses through three stages, starting with short contexts and synthetic data, moving to real-world business documents, and finishing with long-context scientific articles (up to 16k tokens).

- Unified Interface: The model treats “pipeline” stages (detection, reading, grounding) as different input-output schemas of a single model, allowing downstream systems to request exactly the data format they need (e.g., plain text vs. JSON boxes).
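To make the “one model, many schemas” idea concrete, here is a minimal sketch of how a downstream system might pick a task prompt and consume the result. The task keys, the prompt wording, and the JSON output shape are all hypothetical assumptions made for illustration. The assumed output is a list of objects with `text` and `bbox` as `[x1, y1, x2, y2]` pixel coordinates. GutenOCR's actual prompts and output formats are defined by its release and may differ.

```python
import json
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical prompts for the task families named above; the real
# GutenOCR prompt wording and output schemas may differ.
HYPOTHETICAL_PROMPTS = {
    "full_page_reading": "Read all text on this page as plain text.",
    "full_page_grounded": "Read all text on this page and return JSON with line boxes.",
    "detection": "Return bounding boxes for every text line.",
    "localized_reading": "Read the text inside the box {bbox}.",
    "conditional_detection": "Return bounding boxes for the text: {query}",
}


@dataclass
class GroundedLine:
    text: str
    bbox: Tuple[int, int, int, int]  # assumed (x1, y1, x2, y2) in pixels


def parse_grounded_json(raw: str) -> List[GroundedLine]:
    """Parse an assumed JSON list of {"text": ..., "bbox": [x1, y1, x2, y2]} objects."""
    return [GroundedLine(r["text"], tuple(r["bbox"])) for r in json.loads(raw)]


def to_plain_text(lines: List[GroundedLine]) -> str:
    """Drop the geometry when a consumer only needs a transcript."""
    return "\n".join(line.text for line in lines)


# Example: a made-up grounded response for a two-line invoice snippet.
raw = (
    '[{"text": "Invoice #123", "bbox": [40, 32, 310, 58]},'
    ' {"text": "Total: $99.00", "bbox": [40, 410, 260, 436]}]'
)
lines = parse_grounded_json(raw)
print(to_plain_text(lines))
# Build a localized re-read request for the second line's region.
print(HYPOTHETICAL_PROMPTS["localized_reading"].format(bbox=list(lines[1].bbox)))
```

The sketch keeps the geometry in a typed record and derives plain text from it. This lets one grounded response feed both a consumer that wants only a transcript and one that wants boxes.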

Models

We release 3B and 7B parameter models on HuggingFace:

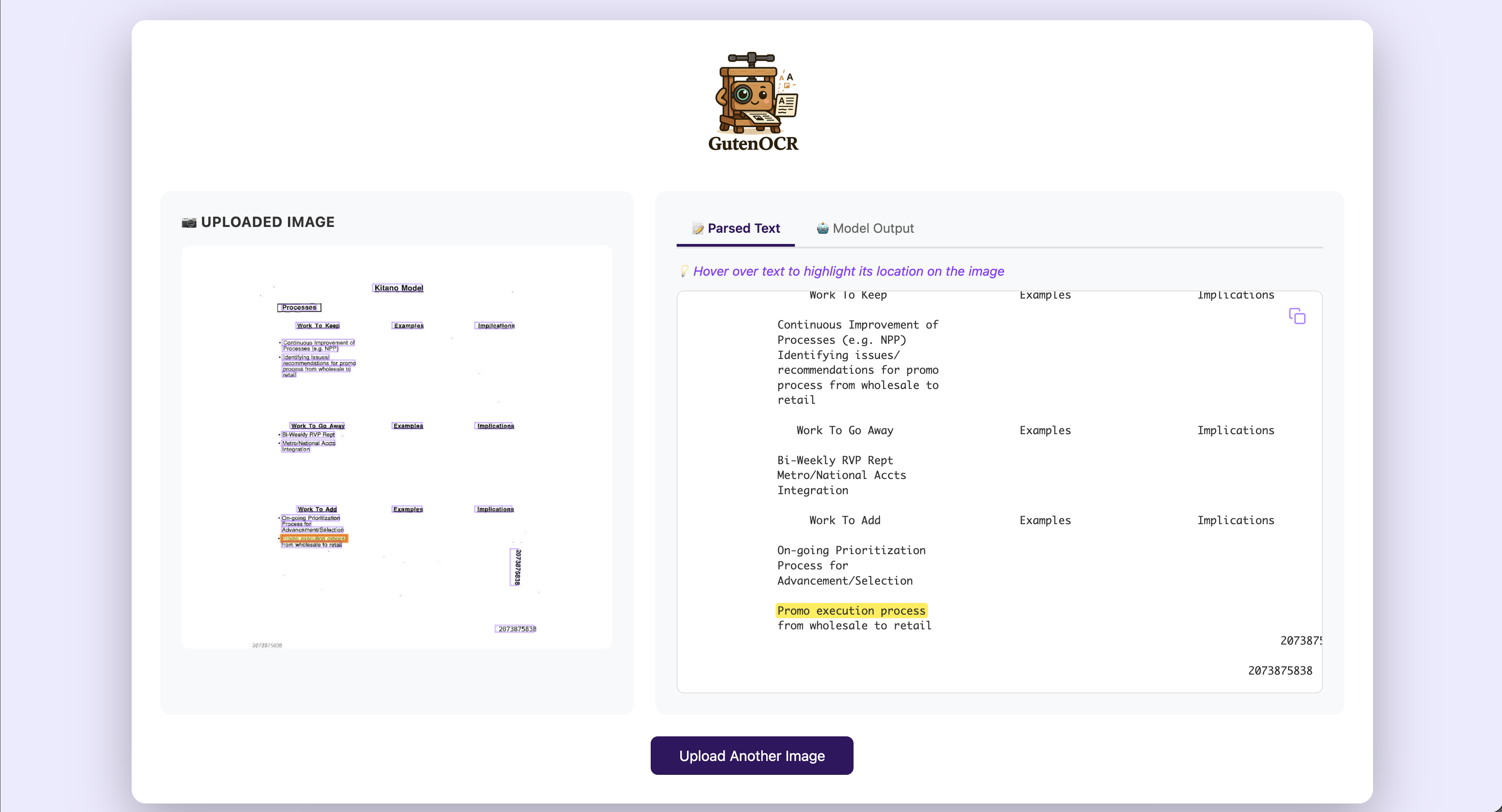

You can try GutenOCR directly at ocr.roots.ai, where you can upload a document image and see the model’s parsed text output alongside bounding-box highlights on the original image.

Why This Matters

GutenOCR is proposed as a foundational layer for systems where every extracted answer must be explicitly linked to supporting pixels. Because its outputs are stable and grounded, it enables human-in-the-loop workflows where reviewers can quickly spot hallucinated or missing text by checking the predicted bounding boxes. This work pairs closely with our release of PubMed-OCR, which provides the large-scale, high-density annotations needed to train such layout-aware models.
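As a rough illustration of linking answers to supporting pixels, the following sketch assumes GutenOCR's output has already been parsed into lines with text and boxes. It checks each value that a downstream system extracted (for example, an invoice field) against those lines and routes values with no supporting box to a reviewer. The names `GroundedLine`, `find_support`, and `review_queue`, the box convention, and the substring-matching rule are all hypothetical simplifications and are not part of GutenOCR.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

BBox = Tuple[int, int, int, int]  # assumed (x1, y1, x2, y2) pixel coordinates


@dataclass
class GroundedLine:
    text: str
    bbox: BBox


def _normalize(s: str) -> str:
    # Case-fold and collapse whitespace so trivial formatting differences still match.
    return " ".join(s.casefold().split())


def find_support(answer: str, lines: List[GroundedLine]) -> List[BBox]:
    """Return the boxes of grounded lines whose text contains the answer."""
    target = _normalize(answer)
    if not target:
        return []
    return [ln.bbox for ln in lines if target in _normalize(ln.text)]


def review_queue(answers: Dict[str, str], lines: List[GroundedLine]) -> dict:
    """Split extracted fields into pixel-supported ones and ones a human should check."""
    supported: Dict[str, List[BBox]] = {}
    needs_review: List[str] = []
    for field, value in answers.items():
        boxes = find_support(value, lines)
        if boxes:
            supported[field] = boxes
        else:
            needs_review.append(field)
    return {"supported": supported, "needs_review": needs_review}


lines = [
    GroundedLine("Invoice No. 4471", (40, 30, 300, 55)),
    GroundedLine("Total due: $1,250.00", (40, 400, 320, 425)),
]
answers = {"invoice_number": "4471", "total": "$1,250.00", "due_date": "2024-05-01"}
print(review_queue(answers, lines))
# {'supported': {'invoice_number': [(40, 30, 300, 55)], 'total': [(40, 400, 320, 425)]}, 'needs_review': ['due_date']}
```

Exact substring matching is deliberately strict. A real system would likely need fuzzier matching (for example, for reformatted dates), and a reviewer would still inspect the image region behind each returned box.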

Resources

- Live Demo: Try GutenOCR on your own documents.

- Paper (arXiv): Full technical report.

- Code (GitHub): Training code and model release.

- GutenOCR-3B (HuggingFace): 3B parameter model weights.

- GutenOCR-7B (HuggingFace): 7B parameter model weights.

Citation

@misc{heidenreich2026gutenocrgroundedvisionlanguagefrontend,
  title={GutenOCR: A Grounded Vision-Language Front-End for Documents},
  author={Hunter Heidenreich and Ben Elliott and Olivia Dinica and Yosheb Getachew},
  year={2026},
  eprint={2601.14490},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2601.14490},
}

Related Work

- PubMed-OCR: The large-scale annotation dataset used to train GutenOCR’s layout-aware grounding capabilities.

- LLMs for Page Stream Segmentation: Complementary work on document understanding at the page-stream level.

- The Evolution of Page Stream Segmentation: Rules to LLMs: Background on the history and evolution of document processing pipelines.

- The Reliability Trap: When 99% Accuracy Isn’t Enough: Explores calibration challenges in deployed page stream segmentation (PSS) systems, a topic directly relevant to GutenOCR’s deployment context as an OCR front-end.