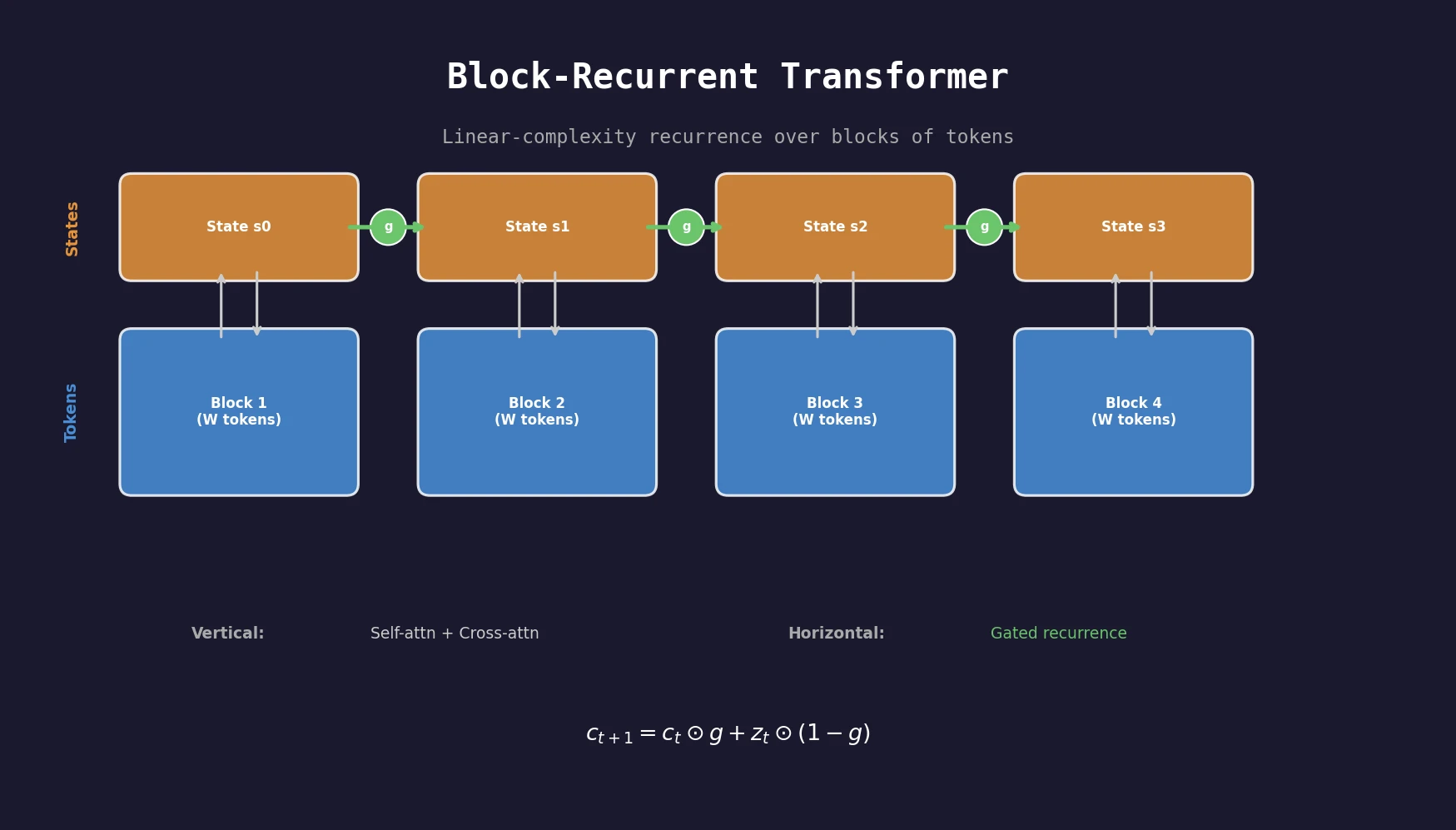

Block-Recurrent Transformers for Long Sequences

A transformer architecture that applies a recurrent cell over blocks of tokens, achieving linear complexity in sequence length while outperforming Transformer-XL baselines on PG19, arXiv, and GitHub datasets.
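
To make the block-wise recurrence concrete, here is a minimal NumPy sketch of the idea. It is not the paper's implementation: the function names, the fixed 0.5 gate, and the absence of learned projections, multiple heads, and layer normalization are all simplifying assumptions. It only illustrates how a fixed-size state carried from block to block keeps the total cost linear in sequence length.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attend(q, k, v, mask=None):
    # Scaled dot-product attention; mask is True where attention is allowed.
    scores = q @ k.T / np.sqrt(q.shape[-1])
    if mask is not None:
        scores = np.where(mask, scores, -1e9)
    return softmax(scores) @ v

def block_recurrent_forward(x, state, block_size):
    """Hypothetical simplified cell.

    x:     (seq_len, d) token embeddings
    state: (num_state, d) recurrent state vectors
    Returns per-token outputs and the final state.
    """
    outputs = []
    for start in range(0, x.shape[0], block_size):
        block = x[start:start + block_size]
        w = block.shape[0]
        causal = np.tril(np.ones((w, w), dtype=bool))
        # Tokens: causal self-attention inside the block, plus
        # cross-attention to the state summarizing earlier blocks.
        out = block + attend(block, block, block, causal) + attend(block, state, state)
        # State: cross-attend to the current block, then blend with the old
        # state. The fixed 0.5 gate is an assumption standing in for a learned gate.
        update = attend(state, block, block)
        state = 0.5 * state + 0.5 * update
        outputs.append(out)
    return np.concatenate(outputs, axis=0), state
```

Each block costs on the order of `block_size**2 + block_size * num_state` attention scores, and there are `seq_len / block_size` blocks, so the total work grows linearly with `seq_len` when the block size and state size are held fixed.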